Making an important gesture with sign language

There are over 300 sign languages in the world. According to the World Federation of the Deaf, they are the main form of communication for over 70 million deaf people worldwide. They even have their own International Day – 23 September. How different are they from spoken languages and how did they develop?

As old as language itself

The concept of sign language itself is very old. In his dialogue Cratylus, written in around 360 B.C., Plato writes, ‘Suppose that we had no voice or tongue, and wanted to communicate with one another, should we not, like the deaf and dumb, make signs with the hands and head and the rest of the body?’ (translation by Benjamin Jowett).

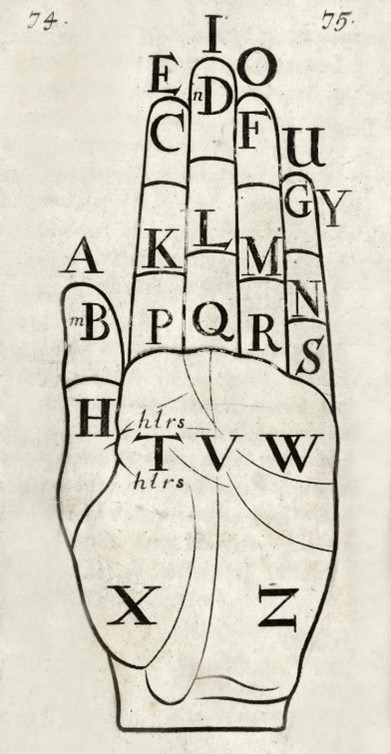

The earliest records of sign language as a codified system date from the 17th century. In 1680, George Dalgarno published Didascalocophus, which features a ‘hand map’ to be used for fingerspelling.

Regional variations

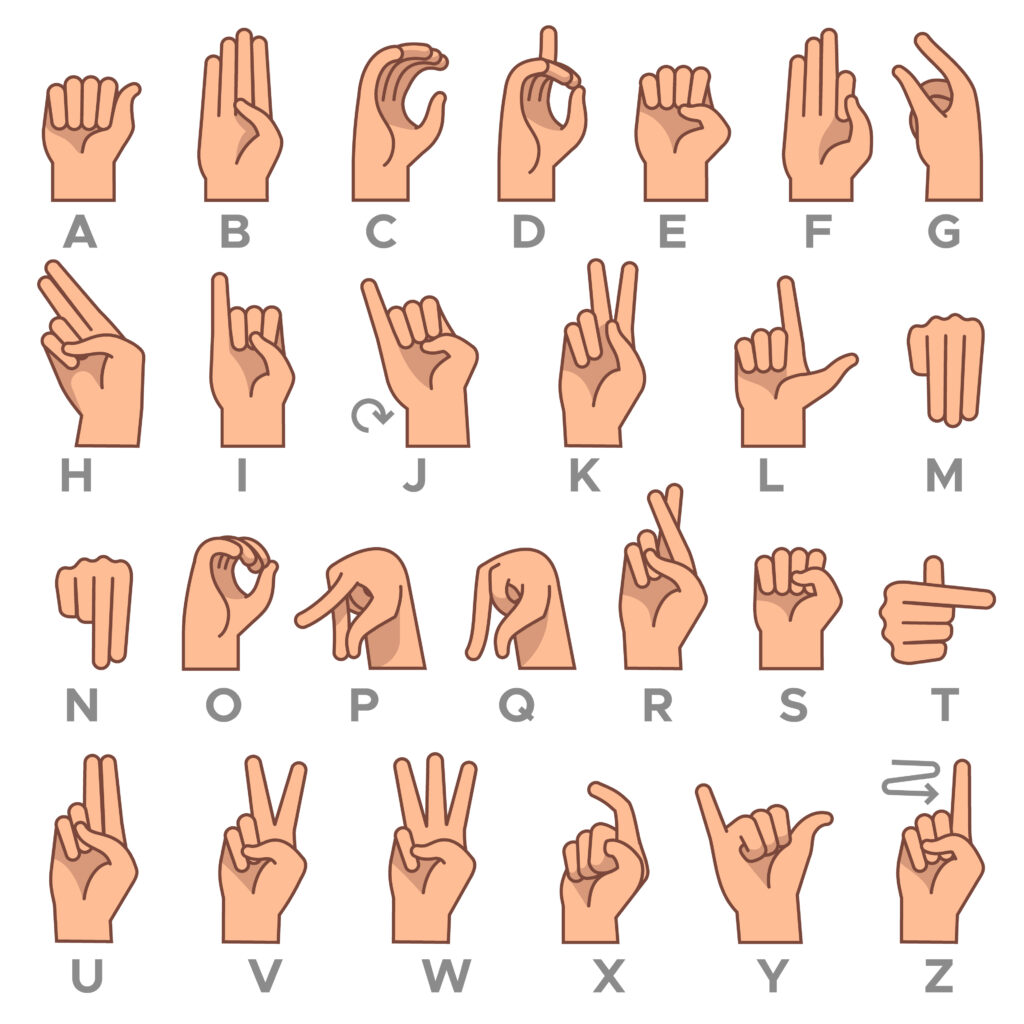

Sign languages developed in a similar way to spoken languages, based on the geographical area where they are used. For example, just as there are many varieties of English around the world (American English, British English, Australian English and so on), English-speaking countries have different sign languages. Indeed, American Sign Language (ASL) and British Sign Language (BSL) have a lot less in common with each other than you might think: ASL fingerspelling uses only one hand, whereas BSL fingerspelling uses both, and only about 30% of their signs are shared. The fact that BSL uses both hands for fingerspelling may well derive from Dalgarno’s map above, with one hand being used to point to areas on the other, whereas ASL has its own single-hand sign for each letter.

Sign languages born of circumstance

Some sign languages are so-called language isolates, i.e. they do not fall into any larger language family. In the village of Bengkala, in Bali, the percentage of deaf people is considerably higher than the global average, and over the past five generations the villagers have developed their own sign language, called Kata Kolok (literally ‘deaf talk’). Kata Kolok is taught to deaf and hearing people alike (it is currently used by about 40 deaf signers and 1,200 hearing signers), with the result that deaf people are fully integrated into the community. Another such language is Al-Sayyid Bedouin Sign Language (ABSL), used by around 150 deaf members and many of the 3,500 hearing members of the Al-Sayyid Bedouin tribe in the Negev desert in southern Israel. ABSL has the interesting feature of breaking down even simple events into the individual actions performed by each person involved: if you wanted to say that a man threw a ball to a girl, you would sign ‘GIRL STAND; MAN BALL THROW; GIRL CATCH.’

Not just for when you can’t be heard

Not all sign languages are intended to facilitate communication with the deaf and hard of hearing. One in particular, which has largely disappeared with the advent of video and audio technology, is tic-tac. Tic-tac is a system of hand signals used at horse and dog racing events in the UK to communicate betting odds between bookmakers and their staff, in order to prevent one bookmaker’s odds being vastly different from another’s in a way that a cunning punter could exploit. Tic-tac men would wear bright white gloves to ensure that their hand signals could be seen from a distance. It is believed that tic-tac was developed in 1888, but by 1999 there were only three professional tic-tac men still working on British racecourses.

A full range of expression

As Plato suggested, sign language involves communicating with more than just the hands. In ASL, lowered eyebrows are used to indicate a ‘who/when/what/where/why’ question, and raised eyebrows indicate a ‘yes/no’ question. Kazakh-Russian Sign Language, on the other hand, uses raised eyebrows to mean either a general question or surprise, and lowered eyebrows to indicate anger. A team of researchers led by Vadim Kimmelman at the University of Bergen described these differences in a study published in 2020 about using video mapping to translate sign language into spoken text.

Photo: Vadim Kimmelman

The image above was captured using the OpenPose software, which tracks hands, body and facial features automatically in 2D videos and is usually used for motion capture in filmmaking. We are not yet at a stage where this technology could be used to translate from sign language to speech and back again in real time, but it does offer some interesting insights into how sign languages are structured.

The human need for understanding

Sign languages are every bit as diverse and expressively rich as spoken languages, and the fact that they have arisen more or less spontaneously in every country and culture in the world shows that people will always find a way to communicate with each other. Creating an entirely visual language that can be conveyed by nothing other than the human body takes tremendous inventiveness, and yet it comes naturally to people from a young age. Perhaps this is another argument in favour of Noam Chomsky’s theory of universal grammar, according to which we all have an innate understanding of the fundamental concepts of language. In any event, sign languages are a testament to our seemingly inexhaustible ability to adapt to our circumstances.
